Designing Agentic AI: A Practical Architecture Map for Conversational UX Designers

Part of the Agentic Experience Design series, published every Monday.

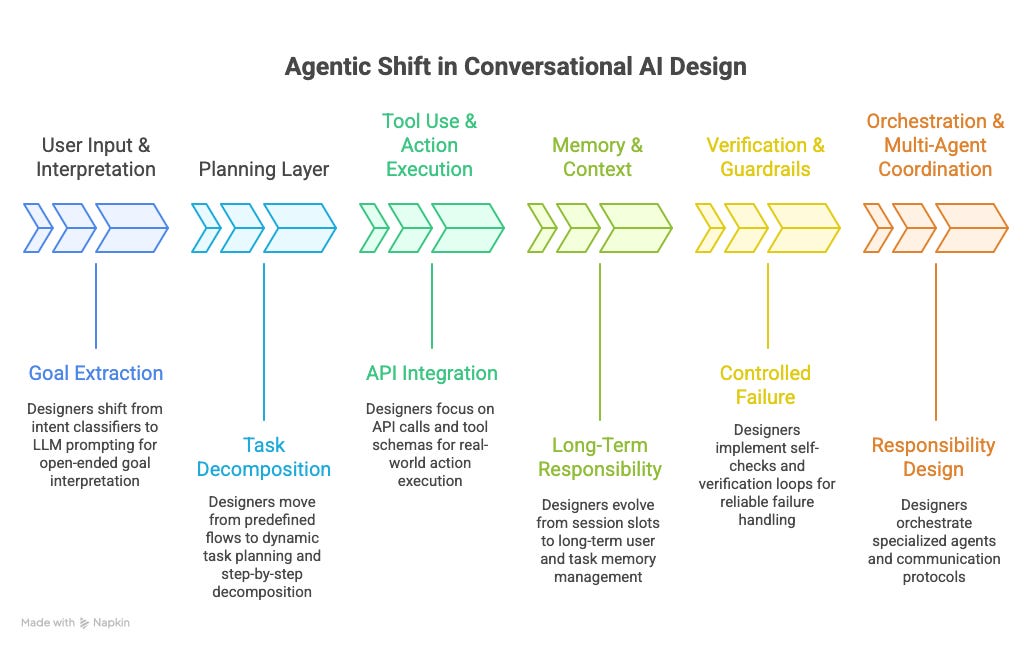

In this article, I argue that agentic AI does not replace conversational design, but moves it upstream into architecture, control, and responsibility. Once systems can plan, use tools, remember information, verify outcomes, and coordinate across agents, the design object is no longer only the conversation. It becomes the system’s behavior: where decisions happen, what the system is allowed to do, and how it fails safely.

Across the piece, I offer a practical architecture map for conversational UX designers, covering the shift from intents to goals, flows to plans, messages to actions, session state to memory, fallbacks to verification, and dialog managers to orchestration.

Readers will come away with a clearer understanding of how their existing skills translate into agentic AI, and which new capabilities they need to develop to design trustworthy behavior.

Agentic AI doesn’t replace conversational design.

It moves it upstream — into architecture, control, and responsibility.

You still design language, tone, and interaction quality. But in agentic systems, those are downstream outputs of something more fundamental: where decisions happen, what the system is allowed to do, and how it fails safely.

Here’s your practical component map.

1) User input and interpretation

From intent detection → goal framing

In classic NLU, we trained the system to label what the user said. In agentic systems, the system has to translate messy input into an actionable goal: an objective, constraints, and missing info. The key design question becomes: what counts as “enough clarity” to proceed safely?

What changes

Users give partial info, change their mind, and mix multiple goals in one message.

The system must decide: clarify, assume safely, or refuse.

What to learn

Goal extraction prompting + structured outputs (JSON / schemas)

Lightweight semantic parsing (not full intent modeling)

What to design

Goal-framing prompts (objective + constraints + unknowns)

Clarification strategy: when to ask vs when to act

👉 You stop training intents and start shaping interpretation rules.
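Here is a minimal sketch of goal framing plus a clarification rule. It assumes the model has already been prompted to return JSON with `objective`, `constraints`, and `unknowns` fields. Those field names, the `BLOCKING_UNKNOWNS` and `OUT_OF_SCOPE` sets, and `decide_next_move` are hypothetical, not any framework's API. The point is that "clarify, assume safely, or refuse" becomes an explicit rule a designer can read and test.

```python
from __future__ import annotations

import json
from dataclasses import dataclass, field
from enum import Enum


class Move(Enum):
    PROCEED = "proceed"
    CLARIFY = "clarify"
    ASSUME = "assume"
    REFUSE = "refuse"


@dataclass
class Goal:
    objective: str
    constraints: list[str] = field(default_factory=list)
    unknowns: list[str] = field(default_factory=list)


# Hypothetical policy: unknowns that must never be guessed, and objectives out of scope.
BLOCKING_UNKNOWNS = {"payment_method", "recipient"}
OUT_OF_SCOPE = {"delete_account"}


def parse_goal(raw_model_output: str) -> Goal:
    """Validate the model's structured output instead of trusting free text."""
    data = json.loads(raw_model_output)
    return Goal(
        objective=data["objective"],
        constraints=list(data.get("constraints", [])),
        unknowns=list(data.get("unknowns", [])),
    )


def decide_next_move(goal: Goal) -> Move:
    """Interpretation rule: what counts as 'enough clarity' to proceed."""
    if goal.objective in OUT_OF_SCOPE:
        return Move.REFUSE
    if any(u in BLOCKING_UNKNOWNS for u in goal.unknowns):
        return Move.CLARIFY  # guessing here would be unsafe, so ask
    if goal.unknowns:
        return Move.ASSUME  # low-stakes gap: assume, and state the assumption
    return Move.PROCEED


raw = '{"objective": "book_table", "constraints": ["Friday", "4 people"], "unknowns": ["seating_preference"]}'
print(decide_next_move(parse_goal(raw)))  # → Move.ASSUME
```

The design decision lives in the two sets: choosing which unknowns are too risky to guess is the interpretation rule, not the model call.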

2) Planning layer

From dialog flows → task decomposition

Flows assume a known path. Planning assumes uncertainty and revision. Agentic systems need to generate steps, adapt them when tools fail, and re-plan when the user adds new constraints. Planning isn’t “happy path + fallback” anymore — it’s constraints + stop conditions + recovery.

What changes

Plans are dynamic, can drift, and must be revisable.

Failures are expected, so planning must anticipate them.

What to learn

Planner prompts (task lists / step graphs)

Basic orchestration concepts (lightweight is enough)

What to design

Planning constraints (what must always be checked first)

Stop conditions (“when is the plan good enough to execute?”)

👉 You’re no longer drawing flows — you’re designing how plans are allowed to emerge.
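A planning layer can be sketched as constraints, a stop condition, and a bounded revision loop. This is a hypothetical example: the `Step` type, `MUST_RUN_FIRST`, `MAX_STEPS`, and the `propose` callable (standing in for a planner prompt) are illustrative assumptions, not a specific orchestration library.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Step:
    name: str
    tool: Optional[str]  # None means no tool has been bound to this step yet


# Hypothetical planning constraints for a customer-support agent.
MUST_RUN_FIRST = ["verify_identity"]
MAX_STEPS = 6


def violations(plan: list[Step]) -> list[str]:
    """Check a proposed plan against the planning constraints."""
    problems = []
    names = [s.name for s in plan]
    if names[: len(MUST_RUN_FIRST)] != MUST_RUN_FIRST:
        problems.append(f"plan must start with {MUST_RUN_FIRST}")
    if len(plan) > MAX_STEPS:
        problems.append(f"plan exceeds {MAX_STEPS} steps")
    unbound = [s.name for s in plan if s.tool is None]
    if unbound:
        problems.append(f"steps without a tool: {unbound}")
    return problems


def ready_to_execute(plan: list[Step]) -> bool:
    """Stop condition: the plan is good enough once it breaks no constraint."""
    return not violations(plan)


def plan_with_revisions(
    propose: Callable[[list[str]], list[Step]], max_attempts: int = 3
) -> Optional[list[Step]]:
    """Re-plan, feeding the previous attempt's problems back to the planner.

    Returns None after max_attempts so the caller can escalate instead of looping.
    """
    feedback: list[str] = []
    for _ in range(max_attempts):
        plan = propose(feedback)
        if ready_to_execute(plan):
            return plan
        feedback = violations(plan)
    return None
```

Capping `max_attempts` and returning `None` is the recovery design: instead of drifting through endless re-plans, the system stops and hands off.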

3) Tool use and action execution

From responses → real-world effects

This is where conversational design meets systems thinking. Once the assistant can call APIs, update records, or trigger workflows, “being helpful” must be bounded by permissions, confirmation logic, and verifiable outcomes. The UX challenge becomes: how do we make action safe, legible, and reversible?

What changes

Mistakes now have consequences (not just awkward phrasing).

The system needs permissioning + action transparency.

What to learn

Tool schemas (inputs/outputs/error states)

Tool-use prompting patterns + basic API literacy

What to design

Tool boundaries (what the agent may / may not do)

Confirmation policy (confirm-before vs confirm-after, per risk tier)

👉 This is where “conversation quality” becomes “operational responsibility.”
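Tool boundaries and a risk-tiered confirmation policy can be expressed as data the agent checks before every call. The sketch below assumes three hypothetical risk tiers and an illustrative order-management tool registry; none of the tool names refer to a real API.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import Enum


class Risk(Enum):
    READ = 1          # no side effects
    REVERSIBLE = 2    # side effects that can be undone
    IRREVERSIBLE = 3  # side effects that cannot be undone


class Confirm(Enum):
    NONE = "none"
    AFTER = "confirm_after"    # act, then show what happened plus an undo path
    BEFORE = "confirm_before"  # ask the user before acting


@dataclass
class ToolSpec:
    name: str
    inputs: dict[str, type]  # input name -> expected Python type
    risk: Risk


# Hypothetical registry: any tool not listed is outside the agent's boundary.
TOOLS = {
    "get_order_status": ToolSpec("get_order_status", {"order_id": str}, Risk.READ),
    "change_delivery_date": ToolSpec(
        "change_delivery_date", {"order_id": str, "date": str}, Risk.REVERSIBLE
    ),
    "issue_refund": ToolSpec(
        "issue_refund", {"order_id": str, "amount": float}, Risk.IRREVERSIBLE
    ),
}

CONFIRMATION_POLICY = {
    Risk.READ: Confirm.NONE,
    Risk.REVERSIBLE: Confirm.AFTER,
    Risk.IRREVERSIBLE: Confirm.BEFORE,
}


class ToolCallError(Exception):
    """Raised when a proposed call violates the tool schema or boundary."""


def check_call(tool_name: str, args: dict) -> Confirm:
    """Validate a proposed tool call and return the confirmation it requires."""
    spec = TOOLS.get(tool_name)
    if spec is None:
        raise ToolCallError(f"'{tool_name}' is outside the agent's tool boundary")
    missing = set(spec.inputs) - set(args)
    if missing:
        raise ToolCallError(f"missing inputs: {sorted(missing)}")
    for key, expected in spec.inputs.items():
        if not isinstance(args[key], expected):
            raise ToolCallError(f"'{key}' should be {expected.__name__}")
    return CONFIRMATION_POLICY[spec.risk]
```

Keeping the policy in a table rather than in prompt wording means a reviewer can see, in one place, which actions the agent may take silently and which need the user's explicit go-ahead.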

4) Memory and context

From session state → long-term responsibility

Slots and session variables were mostly technical plumbing. Memory in agentic systems is product policy. You’re deciding what gets remembered, for how long, and whether retrieval should override the model’s tendency to “fill gaps.” This is also where over-personalization creeps in if you’re not careful.

What changes

Context isn’t just “helpful” — it can become harmful or creepy.

Stale memory can be worse than no memory.

What to learn

RAG (retrieval-augmented generation) basics + retrieval concepts (vector stores, conceptually)

Memory scoping strategies (short-term vs long-term)

What to design

Memory policy (what to store, why, and for how long)

Forgetting/expiration + user control patterns

👉 Memory is no longer technical plumbing; it’s a design choice with ethical weight.
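A memory policy can be enforced in code rather than left to the prompt. This sketch assumes two hypothetical memory kinds with illustrative retention windows and a `NEVER_STORE` list. The `MemoryStore` class is an in-memory stand-in, not a vector store or a specific RAG library.

```python
from __future__ import annotations

import time
from dataclasses import dataclass
from typing import Callable, Optional

# Hypothetical policy: which kinds of facts may be stored, and for how long (seconds).
RETENTION = {
    "session": 60 * 60,            # short-term: one hour
    "preference": 90 * 24 * 3600,  # long-term: 90 days, then re-confirm
}
NEVER_STORE = {"health", "payment_card"}


@dataclass
class MemoryItem:
    key: str
    value: str
    kind: str
    stored_at: float


class MemoryStore:
    def __init__(self, clock: Callable[[], float] = time.time):
        self._items: dict[str, MemoryItem] = {}
        self._clock = clock  # injectable so expiry can be tested

    def remember(self, key: str, value: str, kind: str) -> bool:
        """Store only what the policy allows; return False if refused."""
        if kind in NEVER_STORE or kind not in RETENTION:
            return False
        self._items[key] = MemoryItem(key, value, kind, self._clock())
        return True

    def recall(self, key: str) -> Optional[str]:
        """Expired items are treated as absent: stale memory is worse than none."""
        item = self._items.get(key)
        if item is None:
            return None
        if self._clock() - item.stored_at > RETENTION[item.kind]:
            del self._items[key]
            return None
        return item.value

    def forget(self, key: str) -> None:
        """User control: an explicit 'forget this' always wins."""
        self._items.pop(key, None)
```

Treating expiry as absence forces the agent to ask again rather than act on an outdated preference.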

5) Verification and guardrails

From fallbacks → controlled failure

Confidence thresholds don’t protect you from confident nonsense. Reliability comes from explicit verification moments: before acting, after acting, and before claiming something is true. Failure shouldn’t be hidden behind polite language — it should be controlled, visible, and recoverable.

What changes

The system must prove it’s right (or pause) before it acts.

“I did it” must be tied to tool confirmation, not vibes.

What to learn

Verification prompting + simple evaluation heuristics

Rule-based constraints around risky outputs (when needed)

What to design

Verification checkpoints (pre-action, post-action, pre-message)

Graceful degradation paths + escalation logic

👉 Reliability is designed, not tuned.
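The three checkpoints can be sketched as small functions around a tool call. Assumptions: tools return a hypothetical `ToolResult` carrying a `confirmation_id`, and the drafting step flags whether a message claims success (`claims_success`). Both are illustrative, not a particular framework.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional


@dataclass
class ToolResult:
    ok: bool
    confirmation_id: Optional[str] = None  # e.g. a booking reference returned by the API
    error: Optional[str] = None


def pre_action_check(args: dict, required: list[str]) -> list[str]:
    """Before acting: return the required inputs that are missing or empty."""
    return [k for k in required if not args.get(k)]


def post_action_check(result: ToolResult) -> bool:
    """After acting: success only counts if the tool returned a confirmation."""
    return result.ok and bool(result.confirmation_id)


def pre_message(draft: str, claims_success: bool, result: Optional[ToolResult]) -> str:
    """Before messaging: a success claim must be backed by a verified tool result."""
    if not claims_success:
        return draft
    if result is not None and post_action_check(result):
        return draft
    if result is not None and result.error:
        # Controlled, visible failure with an escalation path.
        return (
            f"I couldn't complete that ({result.error}). "
            "Do you want me to retry, or hand this to a person?"
        )
    return "I can't confirm this went through yet, so I won't report it as done."
```

Note that `ok=True` without a confirmation ID still fails the post-action check: the system cannot say "I did it" on the strength of a status flag alone.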

6) Orchestration and multi-agent coordination

From dialog manager → responsibility design

As systems scale, “one bot does everything” becomes a blob. Multi-agent patterns can help — but only if they clarify roles, decision rights, and escalation. Otherwise you just get more latency and new failure modes.

What changes

Roles split: planner, executor, verifier, policy layer.

Hand-offs become the new UX.

What to learn

Basic multi-agent patterns (supervisor + specialists)

Role-based prompting + message passing concepts

What to design

Decision rights (who decides vs who executes)

Overlap prevention + escalation pathways (machine↔machine, machine↔human)

👉 This is organizational design — applied to machines.
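Decision rights can be written down as a table and checked mechanically. In this hypothetical sketch, each role lists the task types it may decide and may execute; `check_no_overlap` enforces a single decider per task, and `route` plays the supervisor, escalating to a human when no agent owns a decision or no agent may act on it. Role and task names are illustrative only.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Role:
    name: str
    may_decide: set[str]   # task types this role owns the decision for
    may_execute: set[str]  # task types this role may carry out


# Hypothetical roles; each task type should have exactly one decider.
ROLES = [
    Role("planner", may_decide={"plan"}, may_execute={"plan"}),
    Role("executor", may_decide=set(), may_execute={"refund", "reschedule"}),
    Role("verifier", may_decide={"refund", "reschedule"}, may_execute=set()),
]


def check_no_overlap(roles: list[Role]) -> None:
    """Fail fast if two roles claim decision rights over the same task type."""
    seen: dict[str, str] = {}
    for role in roles:
        for task in role.may_decide:
            if task in seen:
                raise ValueError(f"'{task}' decided by both {seen[task]} and {role.name}")
            seen[task] = role.name


def route(task: str, roles: list[Role]) -> tuple[str, str]:
    """Supervisor routing: return (who decides, who executes)."""
    decider = next((r.name for r in roles if task in r.may_decide), None)
    executor = next((r.name for r in roles if task in r.may_execute), None)
    if decider is None:
        return ("human", "human")  # no agent owns this decision: escalate to a person
    if executor is None:
        return (decider, "human")  # decided, but no agent is allowed to act on it
    return (decider, executor)
```

Running `check_no_overlap` when the system is configured, rather than when a conflict surfaces in a conversation, is what keeps hand-offs predictable.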

A simple re-skilling map

To transition into this new era, we have to swap our old toolset for a new architectural framework:

Understanding: We are moving from matching Intents (labeling what users say) to framing Goals (defining outcomes).

Control: We are trading static Flows for dynamic Plans—and designing the Latency experience that comes with them.

Output: Our focus is shifting from crafting Messages to defining Actions and tool-use boundaries.

Context: We’ve evolved from managing temporary Slots to architecting long-term Memory and retrieval logic.

Safety: We are moving away from simple Fallbacks toward complex Verification loops and Human-in-the-loop handoffs.

Scale: We are no longer managing a single Dialog Manager; we are Orchestrating fleets of specialized agents.

The real takeaway

Agentic AI doesn’t demand that designers become engineers.

It demands that designers:

understand where decisions happen

define boundaries and responsibilities

and design for failure, not perfection

The technology stack matters — but the architectural thinking matters more.

Agentic AI isn’t about better conversations.

It’s about designing trustworthy behavior.

Your turn

If you design conversational AI today:

Which shift feels hardest right now — goals, planning, tools, memory, or verification?

Where are NLU-style architectures already breaking for you?

👇 Share your perspective in the comments.

If this reframed how you think about conversational design, like, share, or forward it to someone still treating this as “just prompt writing.”